Amid rising reports of underage users accessing social apps and being exposed to harmful content and online predators, social platforms are working to do more to restrict young people's access and limit such threats.

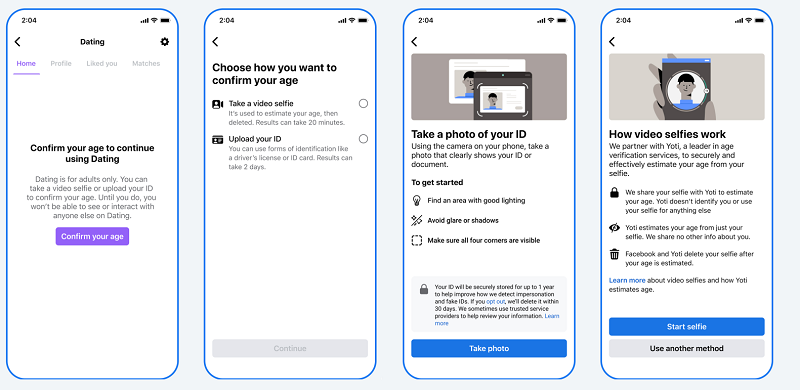

Today, Meta has taken another step on this front, with the expansion of its age verification tools, powered by Yoti, to Facebook Dating in the US.

As per Meta:

“We require people to be at least 18 years old in order to sign up for and access Facebook Dating, and age verification tools will help verify that only adults are using the service and help prevent minors from accessing it.”

The expansion of age verification to Facebook Dating builds on Meta's partnership with Yoti, first announced in June, to verify the ages of Instagram users.

As outlined in the video, Yoti's system is trained on a huge dataset of anonymous images of a diverse range of people from around the world. Drawing on that training, Yoti's process can estimate a person's age from a video selfie by analyzing a range of facial features.

Video-based age detection is becoming increasingly accurate, and Meta will now use age checks across Facebook, Instagram and Facebook Dating, which it says have already helped it detect and remove many underage users.

“Since we began testing new age verification tools on Instagram in June, we’ve found that approximately four times as many people were more likely to complete our age verification requirements (when attempting to edit their date of birth from under 18 to over 18), equating to hundreds of thousands of people being placed in experiences appropriate for their age.”

Users will also now be able to upload a form of identification to verify their age.

“After you upload a copy of your ID, it’ll be encrypted and stored securely, and won’t be visible on your Facebook profile or to other people on the app. Once your age has been verified, you can manage how long your ID is saved for.”

Combined, these options give Meta a whole new toolset to help stop underage users from accessing its apps and services, which, as noted, has become a bigger focus amid concerning reports of kids being lured into dangerous corners of the web.

Last week, Bloomberg published a harrowing report on the rising number of children who've died as a result of dangerous challenges that have circulated on TikTok. Kids under the age of 13 are not supposed to be able to access TikTok's main app, but as Bloomberg reports, many simply lie about their age to get around its lax access checks.

In the report, Bloomberg notes that TikTok has also considered video ID options, but concerns about collecting personal data, and the potential for that data to be shared with its Chinese parent company, have left it hesitant to move ahead.

TikTok has noted, however, that it already removes many accounts that attempt to get around its age rules. The company says it took down 41 million such accounts in the first half of this year alone, which further highlights the scale of the problem and the need for more action on this front.

Twitter, under new owner Elon Musk, is also in the midst of a new push to verify human users, though its focus is less on age-gating and more on weeding out bots. Here, too, video ID could play a role: while no system is foolproof, requiring users to submit selfie images makes the process a lot harder to cheat.

That could be a significant step, so it's good to see Meta expanding its use of video ID into more areas, where it could have a major impact on this critical issue.

Meta says it plans to expand its age verification tools to more regions and features soon.