Meta has released its latest community standards enforcement overview, which outlines how many violations of its rules it's detecting across its apps, and the actions it has taken as a result.

The report includes data on hate speech removals, restricted goods, fake accounts and more. You can read Meta’s full report here, but in this post, we’ll take a look at some of the key shifts in Q4 2022.

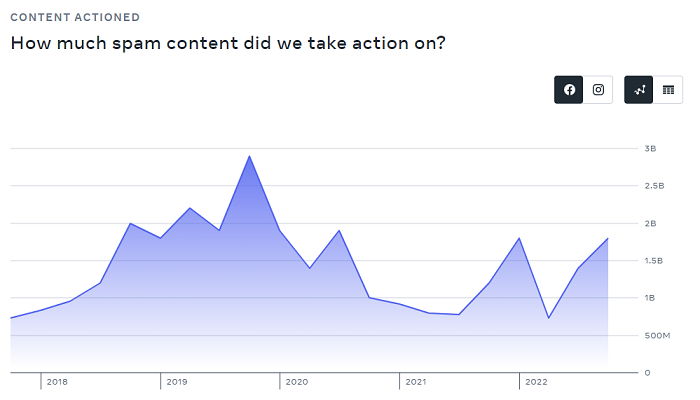

First off, on spam – Meta saw a slight uptick in spam content towards the end of last year, with 1.8 billion posts actioned in the period.

Meta says that this was due to an increased number of spam attacks in October, and that ‘fluctuations in enforcement metrics for spam are expected due to the highly adversarial nature of this space’.

So if you felt like you were seeing more junk on Facebook at the end of the year, you probably were. The majority of these removals were not appealed.

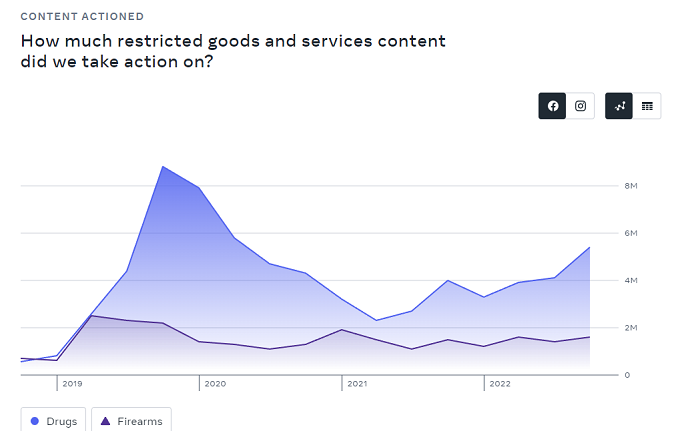

On restricted goods, Meta says that it actioned more content in Q4 due to improvements made to its proactive detection technology.

As you can see in this chart, Meta removed a lot more drug-related content in the period, reflecting its improved detection tools. Unlike the spam removals, many of these additional drug-related removals were appealed by users.

Meta also notes that instances of violence and incitement decreased in Q4, as did suicide and self-injury content, again due to improved, proactive detection.

But this is a concern:

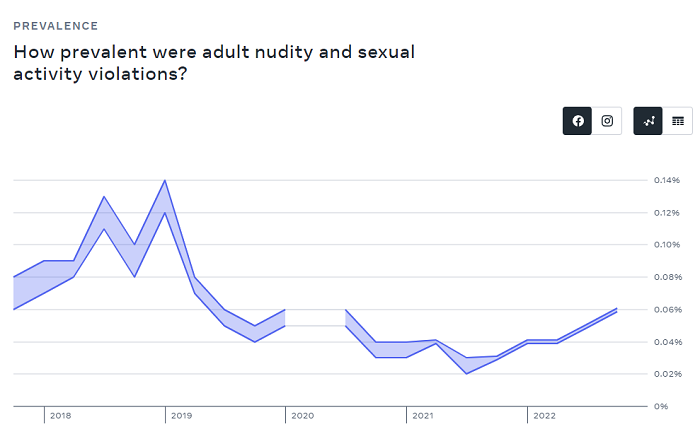

I mean, it’s a positive that Meta is now detecting more of this content, and taking action as a result, but its prevalence remains a key issue, and one that all social platforms need to be working to address.

Meta also saw a slight uptick in violations of its nudity and sexual activity policies at the end of last year.

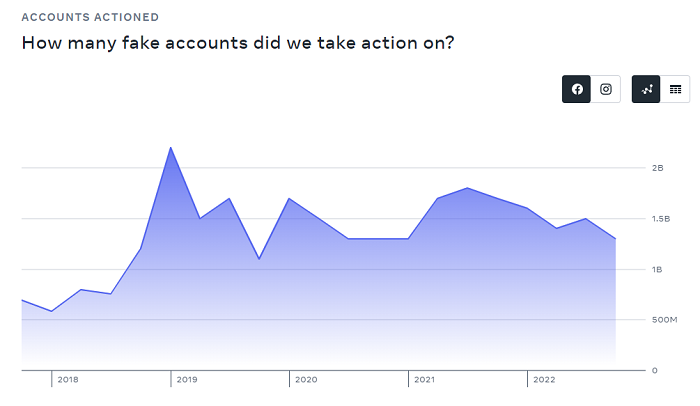

Action on fake accounts, meanwhile, remains steady.

Meta continues to estimate that fake accounts represent around 4-5% of its worldwide monthly active user count on Facebook. At 2.96 billion monthly actives, the upper 5% estimate equates to around 148 million fake accounts, so there are still a lot on there. But the estimate essentially acknowledges that there will always be some fake accounts present. Around 5% is considered an expected level, and it has remained the estimate for both Facebook and Twitter for years.

Overall, there are no big surprises in Meta’s latest update, but it is worth noting the various shifts in user behavior, and how Meta’s systems are evolving to detect them.

There are some key concerns in there, but in general, Meta’s systems are getting better at detecting violations, which is a positive.

You can read Meta’s full community standards enforcement report here.