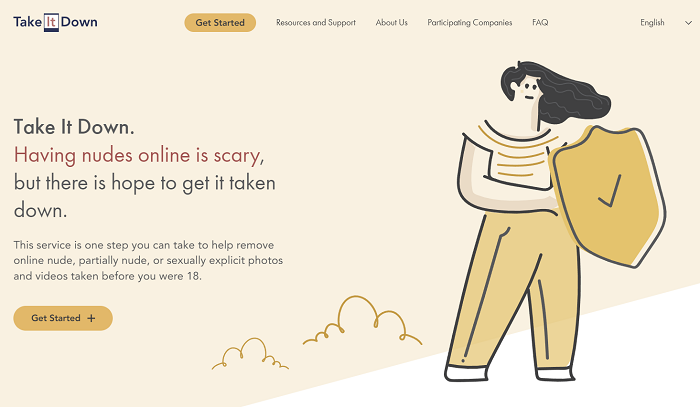

Meta has announced a new initiative to help young people prevent their intimate photos from being distributed online. Both Instagram and Facebook are joining ‘Take It Down’, a new program created by the National Center for Missing and Exploited Children (NCMEC) that gives young people a safe way to detect, and take action on, intimate images of themselves on the web.

Take It Down enables users to create digital signatures of their images, which can then be used to search for copies online.

As explained by Meta:

“People can go to TakeItDown.NCMEC.org and follow the instructions to submit a case that will proactively search for their intimate images on participating apps. Take It Down assigns a unique hash value – a numerical code – to their image or video privately and directly from their own device. Once they submit the hash to NCMEC, companies like ours can use those hashes to find any copies of the image, take them down and prevent the content from being posted on our apps in the future.”
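To illustrate the general idea, here is a minimal Python sketch of hash-based matching. It is not Take It Down's actual implementation: the announcement doesn't specify which hashing algorithm is used, so SHA-256 stands in here, and the `HashRegistry` class and function names are hypothetical. The point it shows is that only the hash, never the image itself, needs to leave the user's device.

```python
import hashlib


def hash_bytes(data: bytes) -> str:
    """Return a hex digest for raw image bytes (SHA-256 is an assumed stand-in)."""
    return hashlib.sha256(data).hexdigest()


def hash_file(path: str, chunk_size: int = 65536) -> str:
    """Hash a file on the local device, reading in chunks to limit memory use."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


class HashRegistry:
    """Hypothetical stand-in for a shared list of submitted hashes."""

    def __init__(self) -> None:
        self._hashes: set[str] = set()

    def submit(self, image_hash: str) -> None:
        # Only the hash is stored; the image never reaches the registry.
        self._hashes.add(image_hash)

    def matches(self, uploaded: bytes) -> bool:
        # A platform hashes each upload and checks it against the registry.
        return hash_bytes(uploaded) in self._hashes


if __name__ == "__main__":
    private_image = b"placeholder bytes standing in for an image file"
    registry = HashRegistry()
    registry.submit(hash_bytes(private_image))  # computed on the user's device
    print(registry.matches(private_image))      # an identical copy is flagged
    print(registry.matches(b"some other file")) # unrelated content is not
```

One caveat with this sketch: a cryptographic hash like SHA-256 only matches byte-for-byte identical files, so a resized or re-encoded copy would produce a different hash. A production system would likely need a more tolerant matching approach.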

Meta says that the new program will enable both young people and parents to act on their concerns, providing more reassurance and safety without compromising privacy, as they won’t be asked to upload copies of the images themselves, which could cause more angst.

Meta has been working on a version of this program over the past two years, with the company launching an initial version of this detection system for European users back in 2021. Meta launched the first stage of the program with NCMEC last November, ahead of the school holidays, and this new announcement formalizes the partnership and expands the program to more users.

It’s the latest in Meta’s ever-expanding range of tools designed to protect young users, with the platform also defaulting youngsters into more stringent privacy settings, and limiting their capacity to make contact with ‘suspicious’ adults.

Of course, kids these days are increasingly tech-savvy, and can circumvent many of these rules. But even so, there are additional parental supervision and control options, and many people don’t switch from the defaults, even when they can.

Addressing the distribution of intimate images is a key concern for Meta specifically, with research showing that, in 2020, the vast majority of online child exploitation reports shared with NCMEC were found on Facebook.

As per The Daily Beast:

“According to new data from the NCMEC CyberTipline, over 20.3 million reported incidents [from Facebook] related to child pornography or trafficking (classified as “child sexual abuse material”). By contrast, Google cited 546,704 incidents, Twitter had 65,062, Snapchat reported 144,095, and TikTok found 22,692. Facebook accounted for nearly 95 percent of the 21.7 million reports across all platforms.”

Meta has continued to develop its systems to improve on this front, but its most recent Community Standards Enforcement Report did show an uptick in ‘child sexual exploitation’ removals, which Meta says was due to improved detection and ‘recovery of compromised accounts sharing violating content’.

Whatever the cause, the numbers show that this is a significant concern that Meta needs to address, which is why it’s good to see the company partnering with NCMEC on this new initiative.

You can read more about the ‘Take It Down’ initiative at TakeItDown.NCMEC.org.