Meta’s Oversight Board has announced a change in approach that will see it hear more cases, more quickly, enabling it to provide more recommendations on policy changes and updates for Meta’s apps.

As explained by the Oversight Board:

“Since we started accepting appeals over two years ago, we have published 35 case decisions, covering issues from Russia’s invasion of Ukraine, to LGBTQI+ rights, as well as two policy advisory opinions. As part of this work, we have made 186 recommendations to Meta, many of which are already improving people’s experiences of Facebook and Instagram.”

Building on this, and in addition to its ongoing in-depth work, the Oversight Board says that it will now implement a new expedited review process, so that it can provide more advice and respond more quickly in situations with urgent real-world consequences.

“Meta will refer cases for expedited review, which our Co-Chairs will decide whether to accept or reject. When we accept an expedited case, we will announce this publicly. A panel of Board Members will then deliberate the case, and draft and approve a written decision. This will be published on our website as soon as possible. We have designed a new set of procedures to allow us to publish an expedited decision as soon as 48 hours after accepting a case, but in some cases it might take longer – up to 30 days.”

The Board says that expedited decisions on whether to take down or leave up content will be binding on Meta.

The Board will also now provide more insight into its various cases and decisions, via Summary Decisions.

“After our Case Selection Committee identifies a list of cases to consider for selection, Meta sometimes determines that its original decision on a post was incorrect, and reverses it. While we publish full decisions for a small number of these cases, the rest have only been briefly summarized in our quarterly transparency reports. We believe that these cases hold important lessons and can help Meta avoid making the same mistakes in the future. As such, our Case Selection Committee will select some of these cases to be reviewed as summary decisions.”

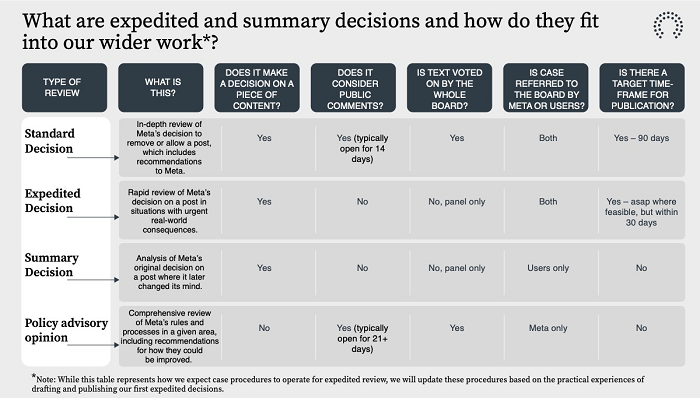

The Board’s new action timeframes are outlined in the table below.

The changes will see a lot more of Meta’s moderation calls double-checked, and more of its policies scrutinized, which should help to establish more workable, equitable approaches to similar cases in future.

Meta’s independent Oversight Board remains a fascinating case study in what social media regulation might look like, if there could ever be an agreed approach to content moderation that supersedes independent app decisions.

Ideally, that’s what we should be aiming for. Rather than having management at Facebook, Instagram, Twitter, etc. all making calls on what is and is not acceptable in their apps, there should be an overarching, preferably global, body that reviews the tough calls and dictates what can and cannot be shared.

Even the staunchest free speech advocates know that there has to be some level of moderation. Criminal activity is, in general, the line in the sand that many point to, and that makes sense to a large degree. But there are also harms that can be amplified by social media platforms, and that can have real-world impacts despite not being illegal as such, which current regulations are not fully equipped to mitigate. And ideally, it shouldn’t be Mark Zuckerberg and Elon Musk making the ultimate call on whether such content is allowed or not.

That’s why the Oversight Board remains such an interesting project, and it will be worth watching how this change in approach, designed to facilitate more, and faster, decisions, affects its capacity to provide a truly independent perspective on these types of tough calls.

Really, all regulators should be looking at the Oversight Board example and considering whether a similar body could be formed for all social apps, either in their region or via global agreement.

I suspect that a broad-reaching approach is a step beyond what’s possible, given the varying laws and approaches to different kinds of speech in each nation. But maybe individual governments could look to implement their own Oversight Board-style model for their nations, taking the decisions out of the hands of the platforms and reducing harm on a broader scale.